The amyloid-cascade hypothesis has governed Alzheimer’s research for over three decades. Introduced in 1992 by Hardy and Higgins, it proposes a clean, linear story: amyloid-beta peptide accumulates, aggregates, forms plaques, and those plaques cause the disease[1]. It is one of the most influential ideas in modern biomedicine, and billions of dollars in drug development have followed its logic downstream.

In 2006, a Nature paper by Lesné et al. identified a specific amyloid subtype, Aβ*56, as the key toxic species responsible for cognitive decline[2]. The paper became a cornerstone. It was cited over 2,300 times. It lent molecular specificity to a hypothesis that had been operating on generalities. And specificity is what turns a hypothesis into a pipeline.

In 2022, a Science investigation found that the paper’s key images appeared to have been manipulated[3]. For sixteen years, the field had treated Lesné et al. as independent confirmation of a causal mechanism. It was not independent. It was one data point, and it was compromised. But the hundreds of papers citing it, the drug programs downstream of it, the review articles that wove it into the consensus narrative: none of them had a way to ask, “How much of what we believe actually depends on this single result?”

They couldn’t ask because the question is inexpressible in the current infrastructure of science. We have citation graphs — PubMed can tell you that 2,300 papers referenced Lesné et al. What it cannot tell you is which of those papers would need to change their conclusions if Lesné’s data were wrong. A citation graph records who read what. A dependency graph records what breaks — and how far the damage propagates. No such graph exists.
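The distinction between the two graphs can be sketched in a few lines. This is a toy illustration, not an existing tool: the paper identifiers, the `cites` and `depends_on` mappings, and the `affected_by` helper are all hypothetical, and the sketch assumes someone has already annotated which citations are load-bearing, which is exactly the information that is missing today.

```python
from collections import defaultdict, deque

# Hypothetical data. Paper names are placeholders, not real citations.
# cites[p] lists everything p references (what a citation index records).
cites = {
    "review_A": ["lesne_2006", "other_study"],
    "trial_B": ["review_A"],
    "methods_C": ["lesne_2006"],
}
# depends_on[p] lists only the sources p's conclusion actually rests on.
depends_on = {
    "review_A": ["lesne_2006"],
    "trial_B": ["review_A"],
    "methods_C": [],  # cites the paper for background only
}


def affected_by(retracted, depends_on):
    """Return papers whose conclusions rest, directly or transitively, on `retracted`."""
    # Invert the mapping: source -> papers that depend on it.
    dependents = defaultdict(list)
    for paper, supports in depends_on.items():
        for source in supports:
            dependents[source].append(paper)

    # Breadth-first walk outward from the retracted paper.
    seen, queue = set(), deque([retracted])
    while queue:
        current = queue.popleft()
        for child in dependents[current]:
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


# Citation view: who referenced the paper.
direct_citers = {p for p, refs in cites.items() if "lesne_2006" in refs}
# Dependency view: whose conclusions break if the paper breaks.
broken = affected_by("lesne_2006", depends_on)
```

In this toy data the two views disagree in both directions: `methods_C` cites the paper but does not depend on it, while `trial_B` never cites it yet inherits the dependency through `review_A`.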

The amyloid story is not an edge case. It is a portrait of how scientific knowledge actually works: claims accumulate through citation, consensus forms through repetition, and the structural dependencies that hold the whole thing together are invisible by default. As Behl (2024) writes in a recent reassessment: after three decades and despite massive investment, the amyloid-cascade hypothesis “still remains a working hypothesis, no less but certainly no more.”[4] The evidence was always weaker than the consensus suggested. No one had the infrastructure to see it.

This is not just an Alzheimer’s problem. It is the default condition of evidence-based reasoning — and it gets worse when the reasoning is automated.

The Flattened Answer

Now take this failure mode and automate it: synthesize thousands of papers into a single paragraph, with no dependency graph underneath.

Ask an LLM: “Does amyloid-beta accumulation cause Alzheimer’s?” You’ll get a confident, well-cited paragraph. Ask again next month. The wording will change. The citations might change. The confidence will shift. You will have no way to know which underlying evidence changed, or whether the model just sampled a different path through latent space. The answer drifted, and the reason is a void[5].

This is what we call the Flattened Answer: a response that looks like knowledge but behaves like a hallucination — static, opaque, and brittle. It strips out every piece of reasoning structure that a rigorous thinker would actually care about: the contradictions, the dependencies, the load-bearing assumptions, the specific experiment that would change your mind.

Here’s the thing nobody in the “AI for science” conversation is saying clearly enough: the problem is not that the models are bad at synthesizing evidence. The problem is that synthesis, as currently implemented, is architecturally incapable of being audited. The amyloid field didn’t need better AI. It needed a system that could answer “what breaks if this paper breaks?” — and no such system exists.

The Model Has a Belief. You Get a Paragraph.

Internally, LLMs are not shallow. Transformers appear to represent belief states as geometric coordinates in high-dimensional space, structures real enough to be found, intervened on, and causally verified[6]. In Othello-GPT, a model trained only on move sequences, researchers recovered a full board-state representation from the activations and pushed on individual directions to predictably change the model’s play[7]. The model had never seen a board. It built one anyway. These models don’t just autocomplete. They model.

But this structure is locked inside activation spaces that no one can read. The model has a belief. You get a paragraph. The reasoning that connects one to the other is inaccessible by construction.

Better prompting will not fix this. Retrieval-augmented generation will not fix this. These are patches on an architecture that was never designed to make belief legible.

What Would Fix It

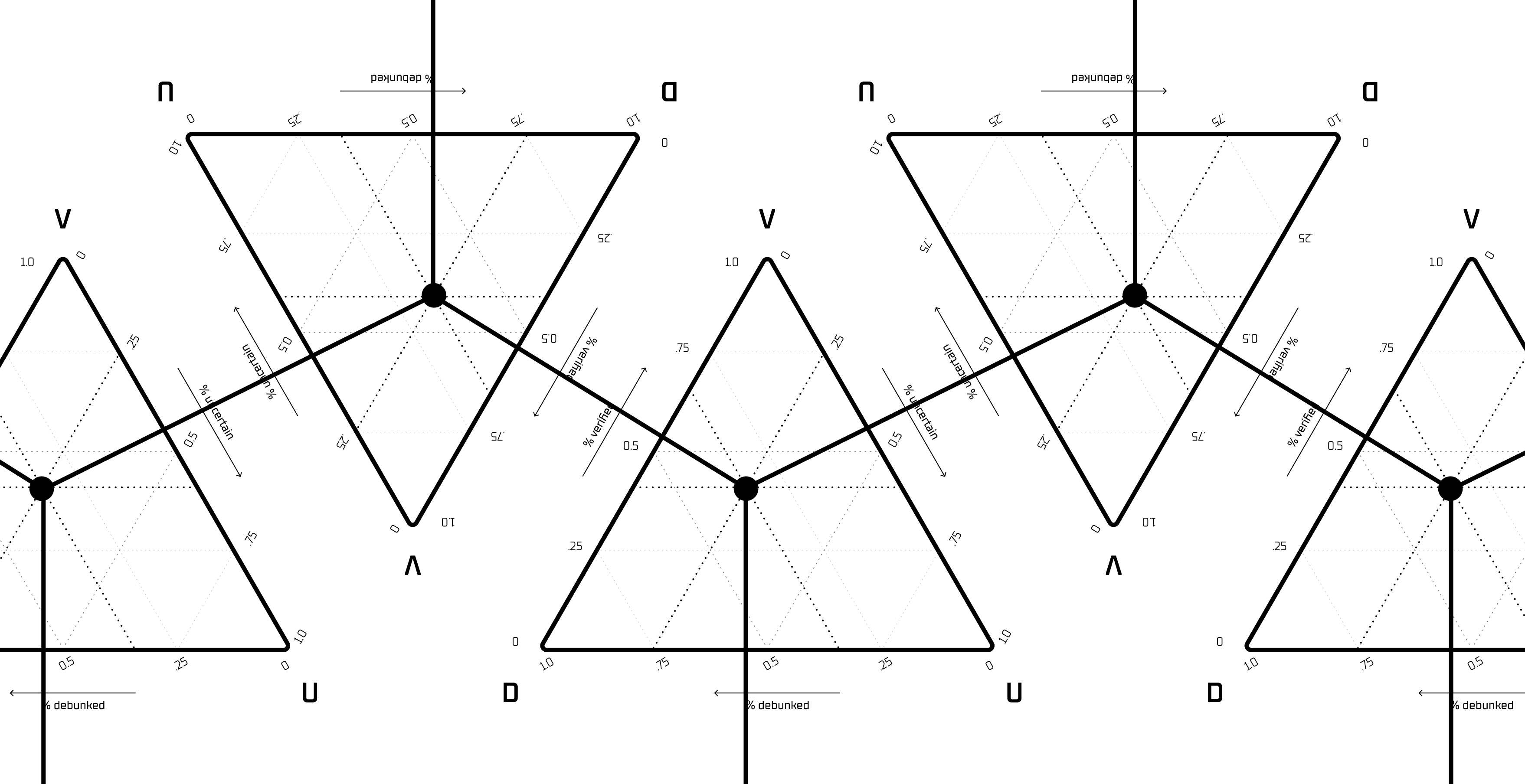

What would fix it is an infrastructure layer where belief is a coordinate, not a label — where every claim has a position, a trajectory, and a provenance chain you can trace back to the specific paper on the specific page that moved it. Where machines do the compiling, but humans can inspect the “why” and structure the disagreement. Where you can ask “what happens to this claim if I retract that paper?” and get an answer in seconds.

That is what we are building. And to build it, we need a new atomic unit of computation.
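To make “a position, a trajectory, and a provenance chain” concrete, here is a toy sketch. It is not the atomic unit introduced in the next post, and nothing in it reflects the actual design: the `Evidence` and `Claim` classes, the additive log-odds update, and the numeric weights are all assumptions chosen only to show that a retraction query can be a cheap recomputation once provenance is recorded.

```python
import math
from dataclasses import dataclass, field


def sigmoid(x: float) -> float:
    """Convert log-odds to a probability."""
    return 1.0 / (1.0 + math.exp(-x))


@dataclass(frozen=True)
class Evidence:
    source: str      # hypothetical paper identifier
    page: int        # where in the source the evidence appears
    log_odds: float  # assumed shift in belief contributed by this evidence


@dataclass
class Claim:
    text: str
    prior_log_odds: float = 0.0
    provenance: list[Evidence] = field(default_factory=list)

    def trajectory(self, excluding=frozenset()):
        """Belief after each piece of evidence, skipping excluded sources."""
        total = self.prior_log_odds
        points = [sigmoid(total)]
        for ev in self.provenance:
            if ev.source in excluding:
                continue
            total += ev.log_odds
            points.append(sigmoid(total))
        return points

    def belief(self, excluding=frozenset()):
        """Current position: the last point on the trajectory."""
        return self.trajectory(excluding)[-1]


# Placeholder claim and evidence; the weights are invented for illustration.
claim = Claim(
    text="example claim",
    provenance=[
        Evidence(source="paper_x", page=3, log_odds=1.0),
        Evidence(source="paper_y", page=7, log_odds=0.5),
    ],
)

with_everything = claim.belief()
without_paper_x = claim.belief(excluding={"paper_x"})
```

Because every shift in belief is stored alongside its source and page, “what happens to this claim if I retract that paper?” reduces to replaying the chain without that source. A real system would need a far more careful update rule than simple addition.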

Science does not just need better synthesis. It needs belief to become inspectable, replayable, and structurally computable. The amyloid story is not an anomaly — it is a preview of what happens everywhere the infrastructure of belief is invisible.

In the next post, we introduce the atomic unit we are building for exactly that purpose — and trace the Aβ*56 story through it, step by step: The Epistron.

Footnotes

1. The original formulation of the amyloid cascade, arguably the most consequential single hypothesis in neurodegenerative disease research. John Hardy and Gerald Higgins, “Alzheimer’s disease: the amyloid cascade hypothesis,” Science, 1992. doi:10.1126/science.1566067

2. This paper gave the amyloid hypothesis its molecular specificity. Lesné et al., “A specific amyloid-β protein assembly in the brain impairs memory,” Nature, 2006.

3. Charles Piller, “Blots on a field?” Science, 2022. Nature published a formal retraction note on June 24, 2024.

4. Christian Behl, “The amyloid-cascade-hypothesis still remains a working hypothesis, no less but certainly no more,” Frontiers in Aging Neuroscience, 2024. doi:10.3389/fnagi.2024.1459224

5. Kadavath et al., “Language Models (Mostly) Know What They Know,” 2022. arXiv:2207.05221

6. Shai et al., “Transformers Represent Belief State Geometry in their Residual Stream,” 2024. arXiv:2405.15943

7. Li et al., “Emergent World Representations,” ICLR 2023. arXiv:2210.13382. See also: Neel Nanda’s extension.